Mobile testing has always been hard. You are dealing with hundreds of device and OS combinations, unpredictable network conditions, and users who interact with your app in ways no QA script ever anticipated. Traditional approaches, whether manual testing, scripted automation, or record-and-replay tools, were built for a more predictable world.

Agentic mobile testing tools change that equation, and the market for these tools has grown fast. Rather than executing a fixed set of instructions, agentic tools use AI to autonomously generate test cases, adapt to UI changes, prioritize coverage across device configurations, and in some cases, connect what happens in testing to what happens in production. The result is faster test cycles, lower maintenance overhead, and meaningfully better coverage.

We’ve assessed each agentic mobile testing tool across the dimensions that matter most for mobile teams: depth of agentic capability, real device coverage, test maintenance overhead, CI/CD integration, and practical fit for different team sizes and use cases.

The Top Agentic Mobile Testing Tools

Not all agentic mobile testing tools are built the same way, and the differences matter more than the marketing suggests. Some platforms started as real device clouds and have layered AI capabilities on top. Others were designed from the ground up to be agentic. And one, Luciq, goes a step further by combining agentic mobile testing with agentic observability in a single platform.

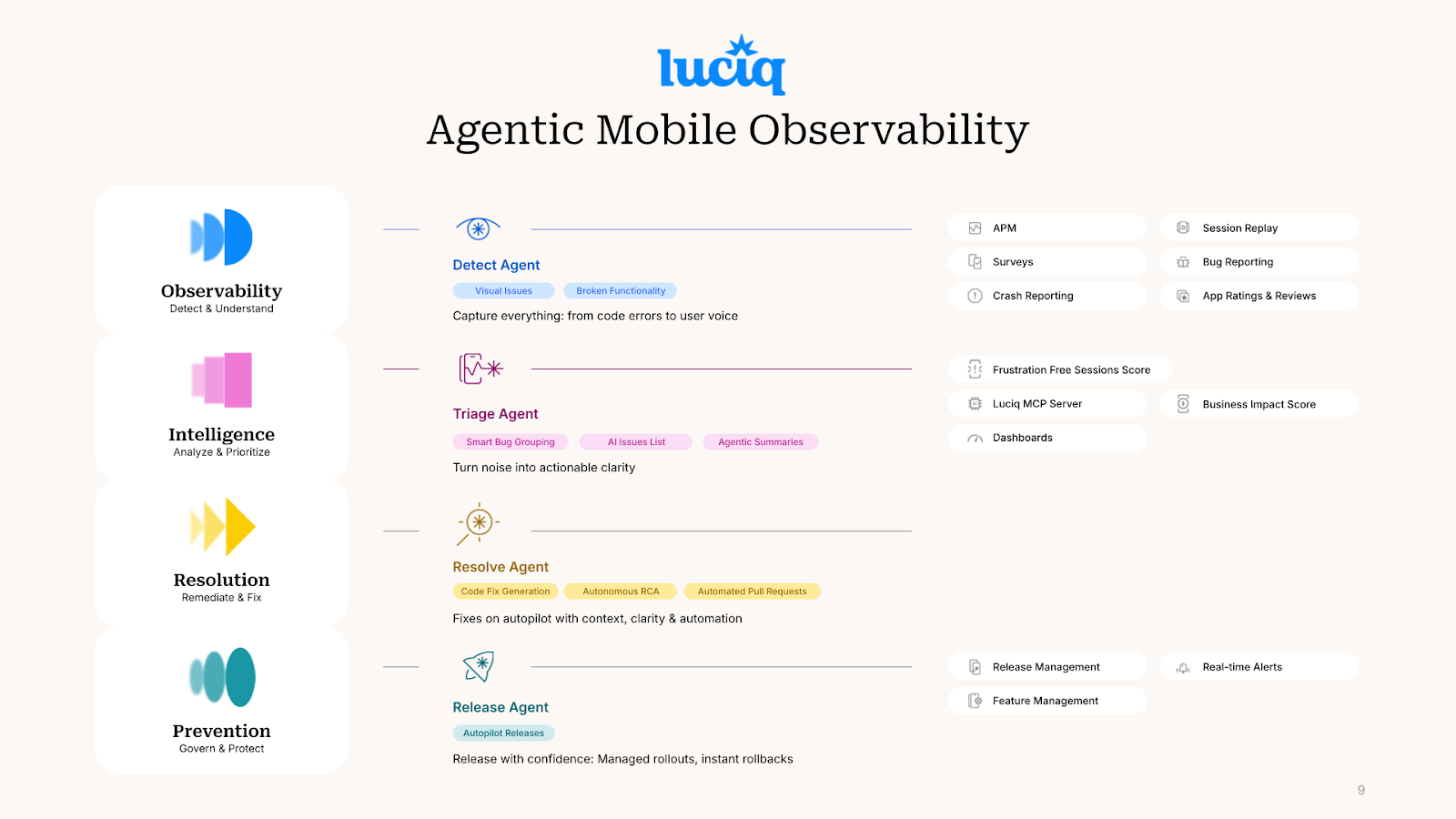

Luciq

Luciq is the only platform that combines agentic mobile testing with agentic mobile observability under one roof. It autonomously generates test cases, adapts to UI changes without manual test updates, and prioritizes coverage across the device and OS combinations that matter most for your user base. On the observability side, it continuously correlates signals across crashes, performance metrics, network events, and user sessions to surface root causes without engineers having to dig through dashboards.

The result is a feedback loop that most mobile teams do not have: test coverage informs which production signals to prioritize, and production signals inform where test coverage needs to improve. In the age of AI, that kind of closed loop between testing and observability is what separates teams that find issues before users do from those that find out when the reviews roll in.

Best for: Mobile engineering teams that want to consolidate testing and observability into a single agentic platform rather than stitching multiple disconnected tools together.

Kobiton

Kobiton's autonomous testing layer explores your app independently, generates test cases based on what it discovers, and executes them across real devices without requiring engineers to write scripts upfront. This makes it particularly useful for teams that need broad test coverage quickly without the overhead of building and maintaining a manual test suite.

It also handles device selection intelligently, using usage data to prioritize the device and OS combinations most relevant to your user base rather than running tests across every possible configuration.
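To make usage-based device prioritization concrete, here is a minimal sketch of the general idea, not Kobiton's actual implementation. It ranks configurations by their share of user sessions and stops once a target share is covered. The `DeviceConfig` type, the `prioritize_devices` function, and the usage numbers are all hypothetical.

```python
from typing import NamedTuple


class DeviceConfig(NamedTuple):
    device: str
    os_version: str
    usage_share: float  # fraction of user sessions, 0.0 to 1.0 (made-up data)


def prioritize_devices(configs, target_coverage=0.8):
    """Pick the most-used configurations until target_coverage of sessions is reached."""
    selected = []
    covered = 0.0
    # Walk the configurations from most used to least used.
    for config in sorted(configs, key=lambda c: c.usage_share, reverse=True):
        if covered >= target_coverage:
            break
        selected.append(config)
        covered += config.usage_share
    return selected, covered


usage = [
    DeviceConfig("Pixel 8", "Android 14", 0.25),
    DeviceConfig("iPhone 15", "iOS 17", 0.5),
    DeviceConfig("Galaxy S21", "Android 13", 0.125),
    DeviceConfig("iPhone 11", "iOS 16", 0.125),
]
selected, covered = prioritize_devices(usage, target_coverage=0.8)
print([c.device for c in selected], covered)
# ['iPhone 15', 'Pixel 8', 'Galaxy S21'] 0.875
```

In this toy data set, three of the four configurations already account for 87.5% of sessions, which is the kind of trade-off a usage-aware selector makes instead of running every test on every device.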

Best for: Teams that need autonomous test generation and real device coverage without investing heavily in scripted test infrastructure upfront.

Worth knowing: As an agentic mobile testing tool, Kobiton is strong on the testing side but does not extend into production observability. What happens after release is outside its scope, so plan to pair it with a separate monitoring solution for full coverage across the development cycle.

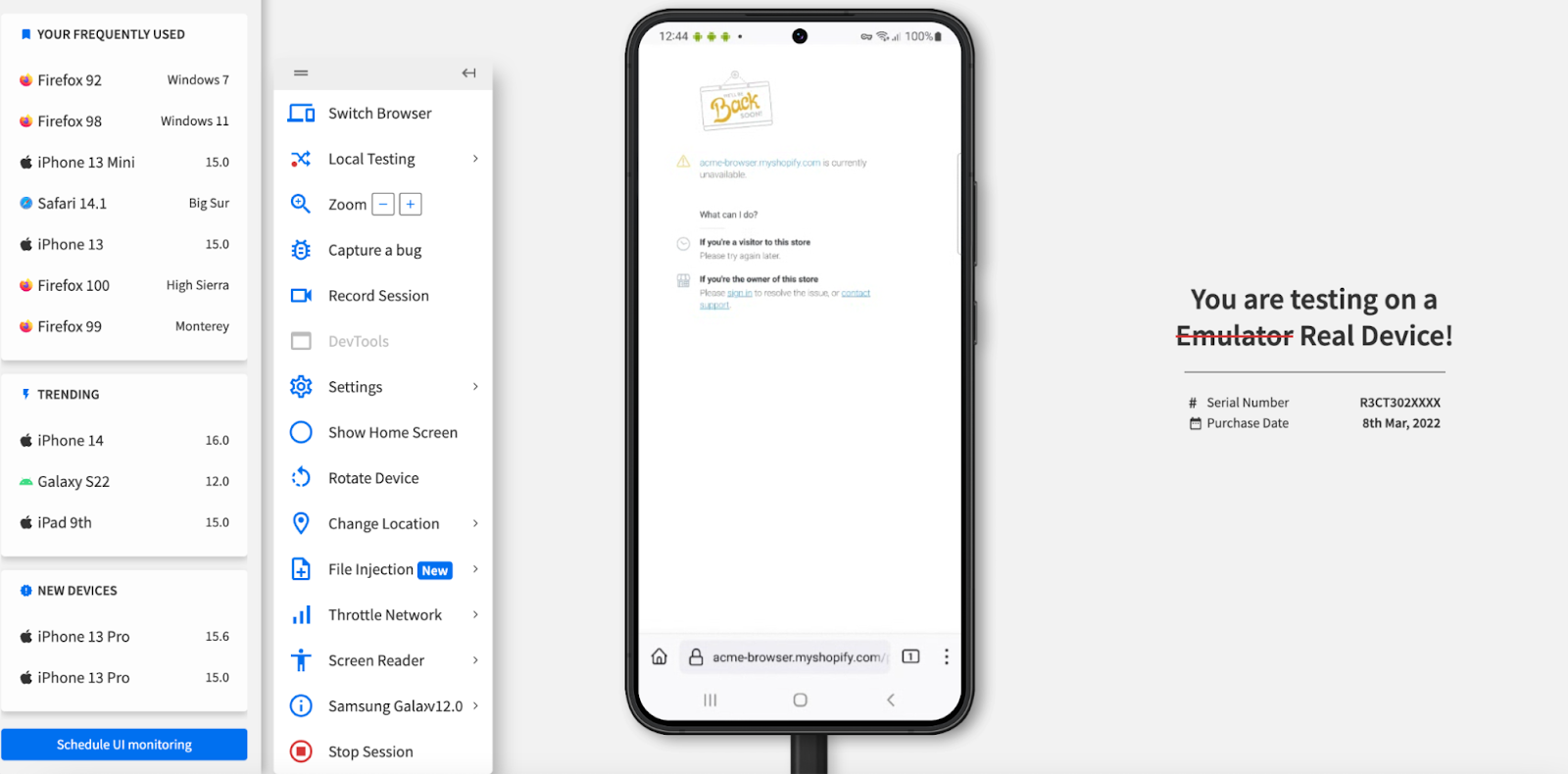

BrowserStack

BrowserStack is known for its real device cloud that gives teams access to thousands of device and OS combinations without managing physical hardware. It has since expanded beyond device infrastructure into AI-assisted testing through its Test Observability and Automate products.

Its AI capabilities focus on making test results more actionable. It automatically categorizes test failures, detects flaky tests, and surfaces patterns across test runs to help teams distinguish genuine regressions from environmental noise. This saves meaningful time during debugging, particularly for teams running large test suites across many device configurations.
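As a rough illustration of what flaky-test detection involves, the sketch below uses one common heuristic: a test that both passes and fails on the same commit is treated as flaky, while a test that only fails on some commit is treated as a likely regression. This is an assumption about the general technique, not a description of BrowserStack's classifier, and `classify_tests` and the sample runs are hypothetical.

```python
from collections import defaultdict


def classify_tests(runs):
    """Label each test from (test_name, commit_sha, passed) tuples."""
    # outcomes[test][commit] holds the set of results seen for that commit.
    outcomes = defaultdict(lambda: defaultdict(set))
    for test_name, commit_sha, passed in runs:
        outcomes[test_name][commit_sha].add(passed)

    labels = {}
    for test_name, by_commit in outcomes.items():
        if any(results == {True, False} for results in by_commit.values()):
            # Same code, different outcomes: environmental noise is likely.
            labels[test_name] = "flaky"
        elif any(results == {False} for results in by_commit.values()):
            # Consistently failing on some commit: likely a genuine regression.
            labels[test_name] = "failing"
        else:
            labels[test_name] = "passing"
    return labels


runs = [
    ("login", "abc123", True),
    ("login", "abc123", False),
    ("checkout", "abc123", True),
    ("checkout", "def456", False),
    ("checkout", "def456", False),
    ("search", "abc123", True),
]
labels = classify_tests(runs)
print(labels)
```

Production systems typically look at far more signal than this (device, OS version, error text, timing), but the core distinction between "same code, different result" and "new code, new failure" is the same.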

Best for: Teams that need broad real device coverage and want AI-assisted failure analysis to make sense of results at scale, particularly those already using Selenium, Appium, or Playwright for test automation.

Worth knowing: BrowserStack's AI capabilities are more assistive than autonomous. It helps engineers work through test results faster but does not generate or maintain tests independently. Teams looking for genuinely agentic mobile testing tools will need to supplement it with additional tooling.

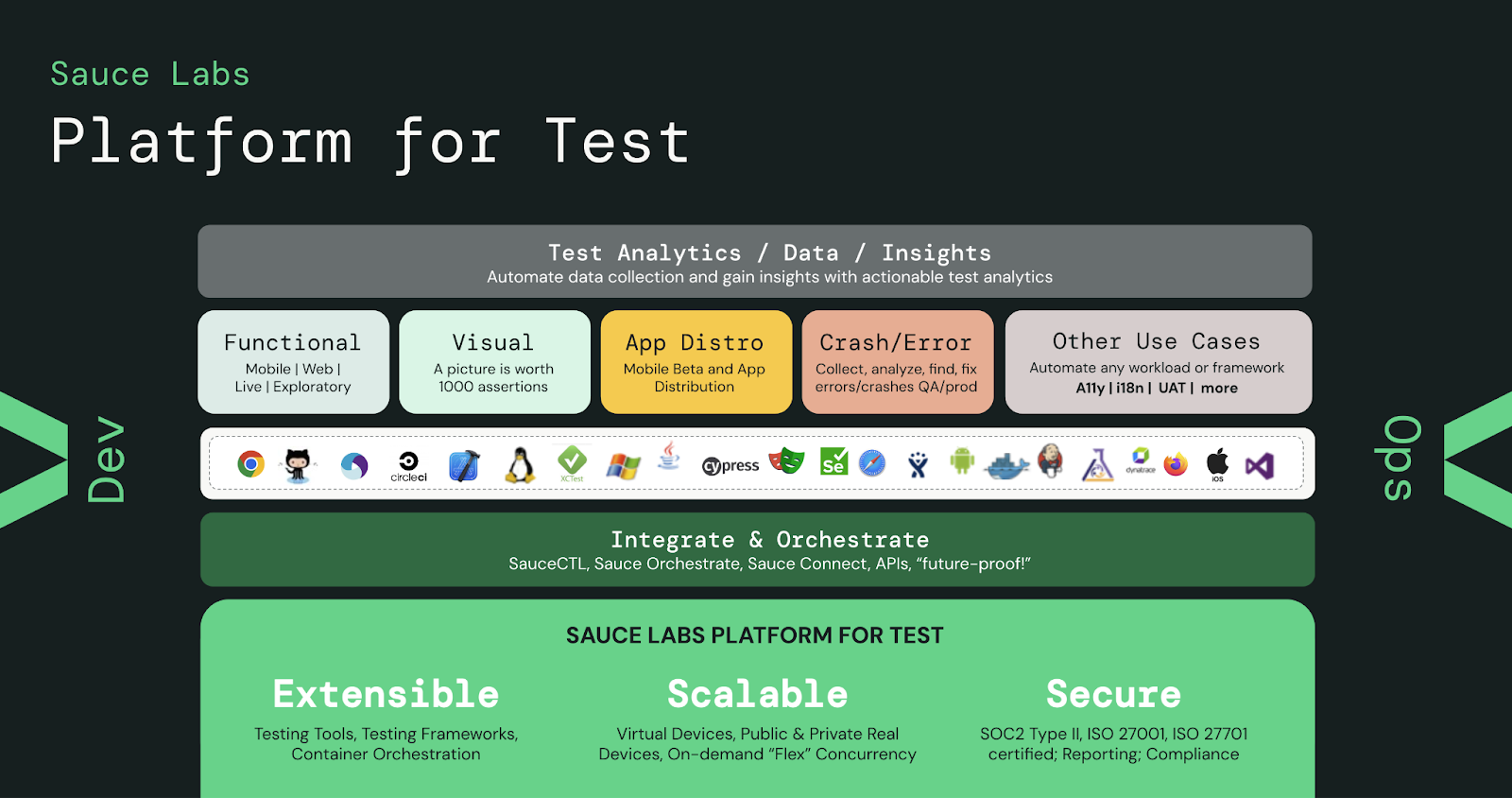

Sauce Labs

Sauce Labs has been a fixture in mobile testing for years, built around one of the largest real device clouds available. It gives teams access to a broad range of physical iOS and Android devices without managing hardware, and its testing infrastructure supports a wide range of automation frameworks including Appium, Espresso, and XCUITest.

Its AI capabilities center around error pattern detection and test analytics. Sauce Labs analyzes test results across runs, identifies recurring failure patterns, and helps teams prioritize what to investigate first. It also offers visual testing through its Screener integration for teams that need UI regression coverage alongside functional testing.

Best for: Enterprise teams running large, complex test suites across a wide range of devices who need reliable infrastructure and AI-assisted failure analysis to manage results at scale.

Worth knowing: Like BrowserStack, Sauce Labs is stronger on infrastructure and assisted analysis than on autonomous test generation. It is a mature, well-documented platform, but teams expecting agentic test creation out of the box will find it falls short of newer purpose-built tools in that area.

Applitools

Applitools takes a different approach to mobile testing than most platforms. Rather than focusing on functional test automation, it specializes in visual AI testing, using computer vision to detect UI regressions across devices, screen sizes, and OS versions automatically.

Its Visual AI engine establishes visual baselines for your app and flags deviations on subsequent test runs, distinguishing meaningful visual regressions from insignificant rendering differences that would otherwise generate noise. This is particularly valuable for mobile teams shipping frequent UI updates across a fragmented device landscape where manual visual review does not scale.
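For a sense of how baseline comparison works at its simplest, here is a deliberately naive sketch. It compares two grayscale screenshots pixel by pixel, ignores small per-pixel differences, and flags a regression only when enough pixels change. Applitools' Visual AI is far more sophisticated than a pixel threshold; the function names, the tolerance values, and the tiny 2x2 "screenshots" are all assumptions made for illustration.

```python
def visual_diff_ratio(baseline, candidate, pixel_tolerance=10):
    """Return the fraction of pixels that differ by more than pixel_tolerance.

    Both images are lists of rows of grayscale values (0 to 255).
    """
    if len(baseline) != len(candidate) or any(
        len(b_row) != len(c_row) for b_row, c_row in zip(baseline, candidate)
    ):
        raise ValueError("screenshots must have the same dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        raise ValueError("screenshots must not be empty")
    changed = sum(
        1
        for b_row, c_row in zip(baseline, candidate)
        for b, c in zip(b_row, c_row)
        if abs(b - c) > pixel_tolerance
    )
    return changed / total


def is_regression(baseline, candidate, pixel_tolerance=10, max_changed_ratio=0.01):
    """Flag a regression when more than max_changed_ratio of pixels changed."""
    return visual_diff_ratio(baseline, candidate, pixel_tolerance) > max_changed_ratio


baseline = [[100, 100], [100, 100]]
rendering_noise = [[103, 100], [100, 98]]   # small anti-aliasing differences
broken_layout = [[100, 100], [100, 255]]    # one pixel changed drastically

print(is_regression(baseline, rendering_noise))  # False
print(is_regression(baseline, broken_layout))    # True
```

The two thresholds are what separate noise from a real change here. A purpose-built visual engine replaces them with a learned notion of what a human would actually notice.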

Best for: Teams that need reliable visual regression testing across a wide range of device and screen configurations, particularly those shipping frequent UI changes where catching visual regressions before release is a priority.

Worth knowing: Applitools is purpose-built for visual testing and does not cover functional test generation or execution on its own. It works best as a complement to an existing automation framework like Appium or Espresso rather than a standalone testing solution.

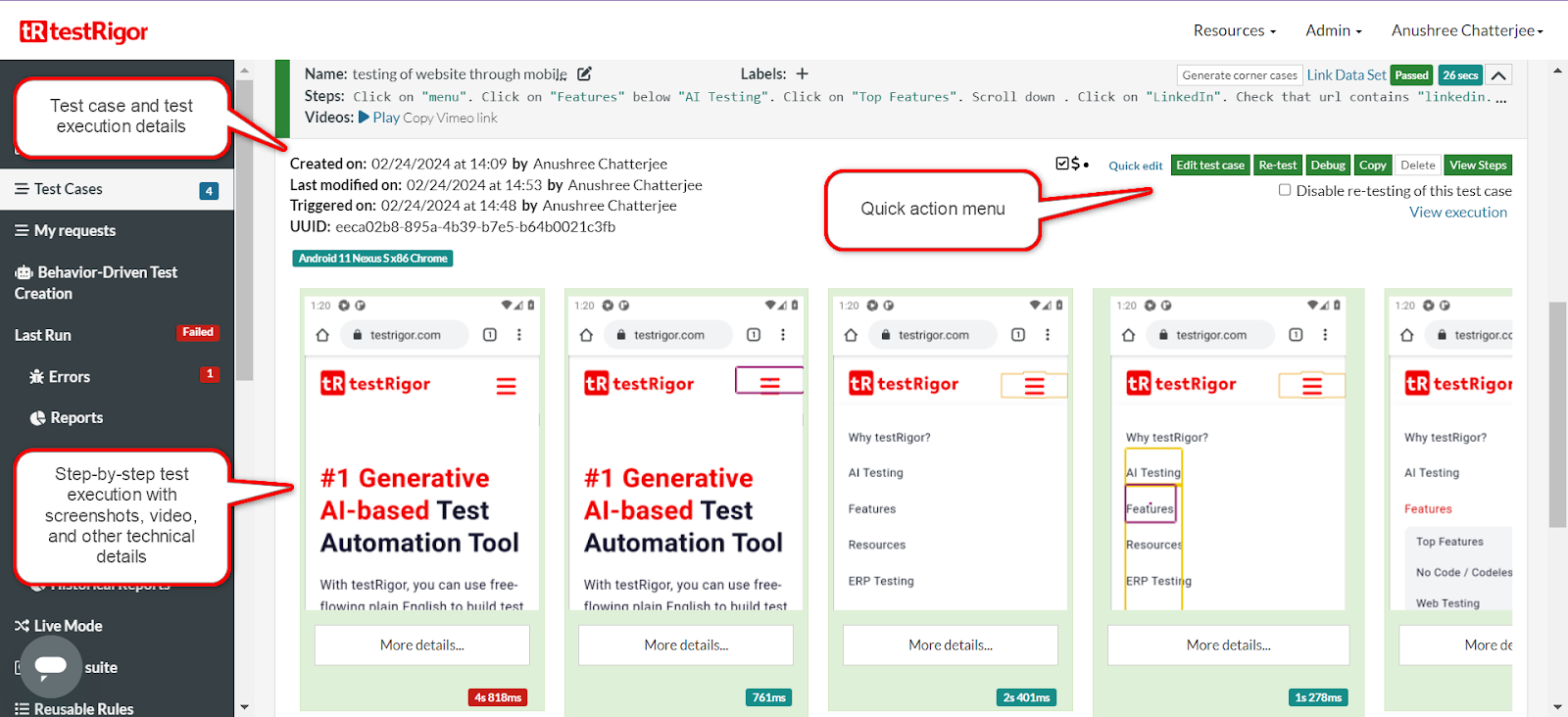

testRigor

testRigor removes the need to write test scripts entirely. Instead of code, tests are written in plain English, making it accessible to QA engineers and non-technical team members alike. Its AI interprets natural language instructions and translates them into executable test steps, handling the underlying automation logic automatically.

Its self-healing capabilities are also worth noting. When UI elements change, testRigor automatically updates affected tests rather than breaking them, which addresses one of the most persistent pain points in mobile test maintenance.
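Self-healing is easier to reason about with an example. The sketch below shows one common pattern, assumed here for illustration and not taken from testRigor's internals: a stored locator carries several attributes, the lookup falls back from the preferred attribute to the next one that still matches, and the stored locator is refreshed from the element it found. The `STRATEGIES` order, both functions, and the sample screen are hypothetical.

```python
# Locator attributes in order of preference (an assumed ordering).
STRATEGIES = ("id", "accessibility_label", "text")


def find_element(screen, locator):
    """Return (element, strategy_index) for the first strategy that matches."""
    for index, strategy in enumerate(STRATEGIES):
        wanted = locator.get(strategy)
        if wanted is None:
            continue
        for element in screen:
            if element.get(strategy) == wanted:
                return element, index
    return None, None


def find_with_healing(screen, locator):
    """Find an element and, if a preferred attribute no longer matched, update the locator."""
    element, index = find_element(screen, locator)
    if element is None:
        raise LookupError(f"no element matches {locator}")
    # Healing happened if a higher-priority attribute was specified but failed.
    healed = any(locator.get(s) is not None for s in STRATEGIES[:index])
    if healed:
        for key in STRATEGIES:
            if key in element:
                locator[key] = element[key]
    return element, healed


# The stored locator still points at the old id, which a refactor renamed.
locator = {"id": "btn_login", "text": "Log in"}
screen = [
    {"id": "login_button", "text": "Log in"},
    {"id": "btn_signup", "text": "Sign up"},
]
element, healed = find_with_healing(screen, locator)
print(healed, locator)
# True {'id': 'login_button', 'text': 'Log in'}
```

The test keeps passing after the rename, and the next run uses the updated id directly instead of falling back again.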

Best for: Teams that want broad test coverage without a heavy engineering investment in scripted automation, particularly those with mixed technical and non-technical QA contributors.

Worth knowing: testRigor's plain language approach makes it fast to get started but can limit precision for complex test scenarios that require fine-grained control over test logic. Teams with sophisticated automation requirements may find it too high-level for edge case coverage.

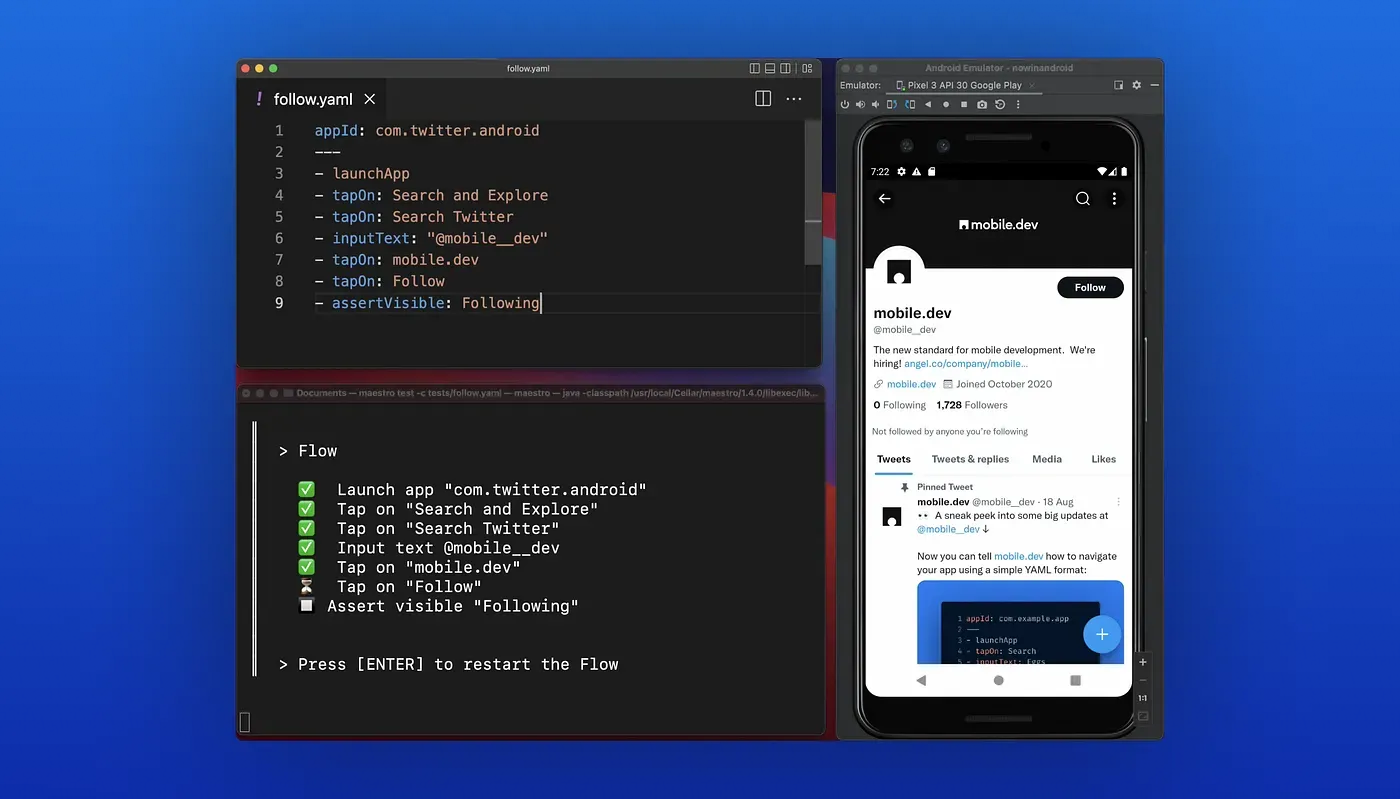

Maestro

Maestro is a developer-friendly mobile UI testing framework built around simplicity. Its YAML-based syntax makes writing test flows straightforward compared to more complex automation frameworks, and its setup time is significantly lower than tools like Appium. It has gained traction quickly among mobile developers who want reliable UI tests without a steep learning curve.

Its cloud offering extends local test execution to real devices, and it has begun integrating AI-assisted capabilities to help teams generate and debug test flows more efficiently.

Best for: Developer-led mobile teams that want a lightweight, fast-to-set-up testing framework with a low maintenance burden, particularly those working with React Native or Flutter apps.

Worth knowing: Maestro is still maturing as a platform. Its agentic capabilities are less developed than purpose-built AI testing tools, and teams with complex enterprise testing requirements may find it limited. It works best for teams that prioritize speed and simplicity over deep autonomous coverage.

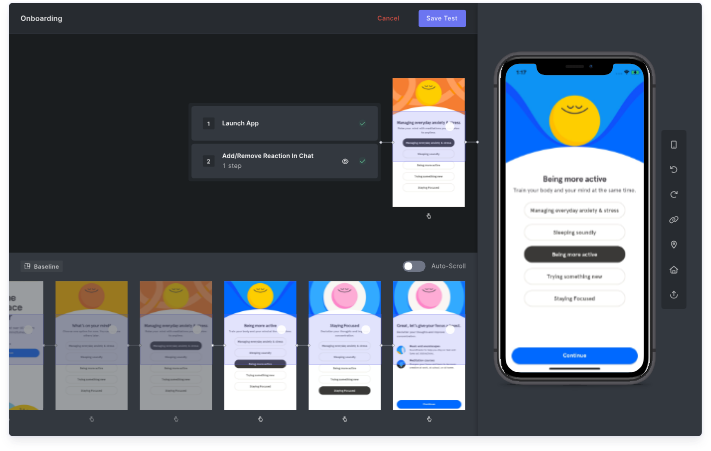

Waldo

Waldo is a no-code mobile testing platform designed to make test creation and maintenance accessible without writing automation scripts. Tests are built by recording interactions with your app, and its AI layer handles the maintenance side by automatically updating tests when UI elements change.

It also offers autonomous test generation, where Waldo explores your app and suggests additional test coverage based on user flows it identifies during exploration. This makes it useful for teams that want to expand coverage without manually authoring every test case from scratch.

Best for: Product and QA teams that need reliable mobile test coverage without dedicated automation engineers, particularly smaller teams where testing ownership is shared across roles.

Worth knowing: Waldo's no-code approach makes it fast to get started but can limit flexibility for teams with complex testing requirements. It covers core user flows well but may not be the right fit for teams that need deep, customizable control over test logic and execution.

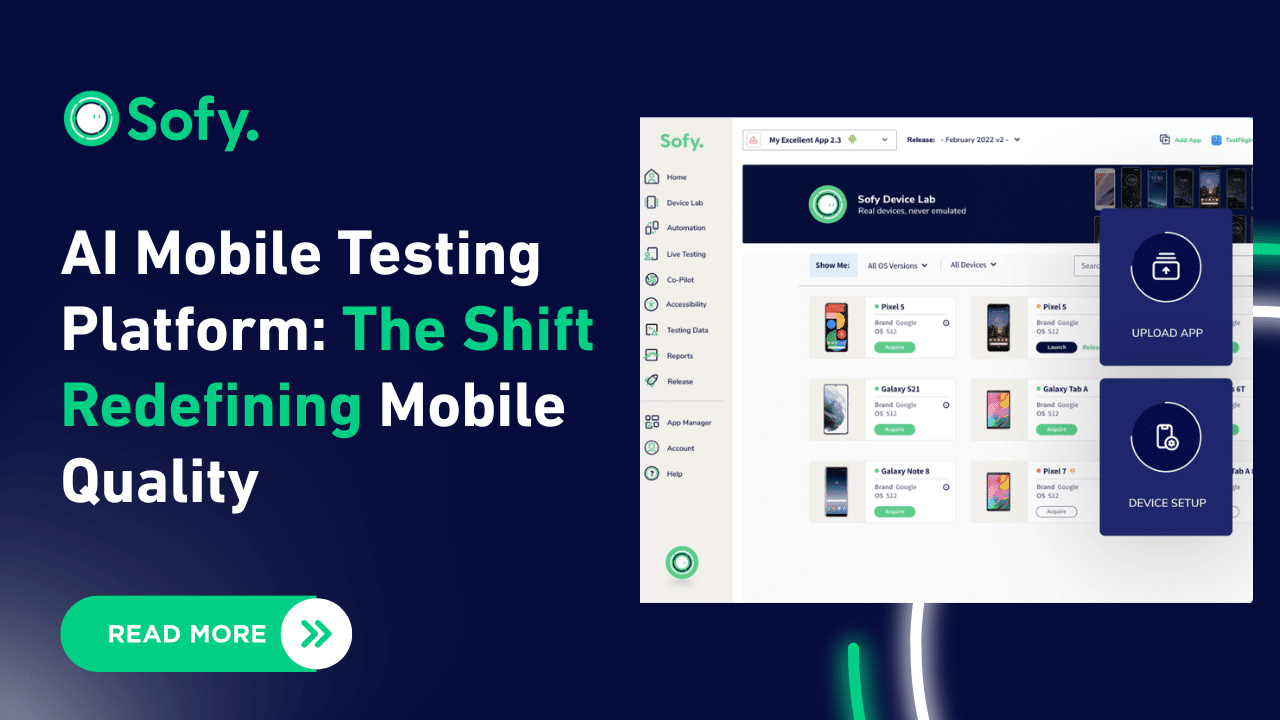

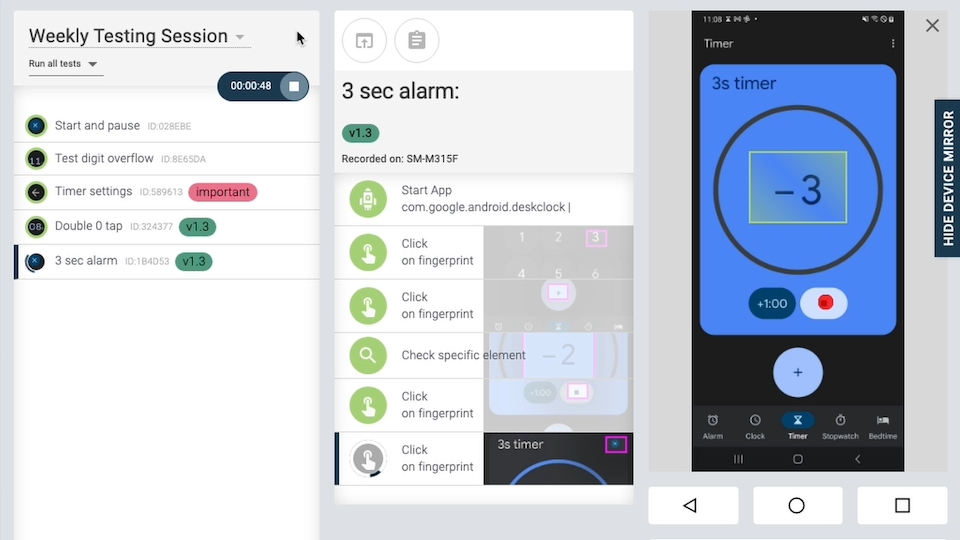

Sofy

Sofy is an AI-powered no-code mobile testing platform that combines test execution with a built-in real device cloud, removing the need to manage separate testing infrastructure. Its AI layer suggests test cases based on app exploration, executes them autonomously, and provides visual test results that make it easy to identify what failed and where.

It also includes built-in integrations with common project management and CI/CD tools, which helps teams fit it into existing workflows without significant setup overhead.

Best for: Small to mid-sized teams that want an accessible, self-contained mobile testing solution with real device coverage and AI-assisted test suggestions built in.

Worth knowing: Sofy is designed for accessibility over depth. Teams with straightforward testing needs will find it easy to adopt, but those with complex automation requirements or large-scale test suites may find its capabilities limiting compared to more mature platforms.

Repeato

Repeato is a no-code mobile testing tool that uses computer vision to record and replay UI interactions, making it accessible for teams that want test coverage without writing automation code. Its AI layer handles element detection visually rather than relying on element identifiers, which means tests are less likely to break when underlying code changes as long as the UI looks the same.

Its self-healing capabilities automatically update tests when visual changes are detected, reducing the maintenance burden that typically comes with UI test suites on fast-moving mobile products.

Best for: Teams looking for a lightweight, affordable no-code testing option with solid self-healing capabilities, particularly those working on apps with frequent UI updates.

Worth knowing: Repeato's visual approach to element detection is a strength in stable UI environments but can produce false positives when apps undergo significant visual redesigns. It is a capable tool for core flow testing but may not meet the needs of teams requiring deep functional coverage or enterprise-scale test infrastructure.

Mobot

Instead of software-based emulation or virtual device clouds, Mobot uses physical robots to tap, swipe, and interact with real mobile hardware. If you need to test how your app responds to actual hardware buttons, biometric sensors, or physical gestures that emulators simply cannot replicate accurately, Mobot covers ground that no purely software-based tool can.

Its agentic layer manages test execution and reporting, coordinating robotic interactions with real devices and surfacing results in a format engineering teams can act on.

Best for: Teams that need to validate hardware-dependent interactions like Face ID, fingerprint authentication, or physical button behavior that virtual device clouds cannot reliably simulate.

Worth knowing: Mobot is a niche solution and is priced accordingly. For most teams, software-based real device clouds will cover the majority of testing needs at a fraction of the cost. Where Mobot earns its place is in specialized testing scenarios where physical hardware interaction is non-negotiable.

How to Choose an Agentic Mobile Testing Tool

There is no single best agentic mobile testing tool, and no shortage of tools claiming to be one. The right choice depends on what you are testing, how much automation you need, and where your team's biggest gaps are.

A few questions worth asking before you decide:

- How agentic do you actually need it to be? There is a real difference between tools that autonomously generate, execute, and maintain tests and those that simply assist engineers in doing those things faster. Be honest about which one your team needs, because the latter is cheaper and easier to adopt.

- Do you need real device coverage or will emulators do? For most functional testing, a real device cloud like BrowserStack or Sauce Labs covers the bases. For hardware-dependent interactions like biometric authentication, that calculus changes.

- Do you need testing alone or testing plus observability? Most agentic mobile testing tools stop at the release boundary. If you want a single platform that connects pre-release test coverage to post-release production signals, that narrows the field considerably.

Most mobile engineering teams end up combining tools: a real device cloud for broad coverage, purpose-built agentic mobile testing tools for autonomous generation and maintenance, and an observability layer for production. The question is how many separate tools you want to manage and how much overlap you are willing to accept between them.

See What Agentic Mobile Testing and Observability Do Together

Most agentic mobile testing tools stop at the release boundary. You validate before you ship, and then a separate set of tools takes over to monitor what happens in production. That handoff is where issues hide. A test suite that passes cleanly in pre-release can miss the crash that only appears on a specific device under real network conditions, or the performance regression that only surfaces under genuine user load.

Luciq is built to close that gap. As the only platform that combines agentic mobile testing with agentic mobile observability, it gives mobile engineering teams a continuous feedback loop that most testing tools simply cannot provide. Test coverage informs which production signals to watch. Production signals inform where test coverage needs to improve. The two work together rather than in parallel.

Contact us to request a demo and see what agentic mobile testing and observability can do for your team.